AMD takes on Intel in 'the internet of things'

From refrigerators to one-armed bandits

AMD took direct aim at Intel's low-power Atom embedded processor and platform on Wednesday with the release of its G-Series Fusion APUs (accelerated processing units), the follow-ons to AMD's recently released C-Series and E-Series APUs for the notebook, netbook, and tablet markets.

"AMD's commitment is to ensure the game-changing technologies we develop for consumers and the enterprise are also available for the vast and growing embedded market," said Patrick Patla, general manager of AMD's server and embedded division, when announcing the G-Series debut.

Vast and growing is no exaggeration. The buzzphrase du jour, "the internet of things", refers to how everything from TVs to toasters is rapidly finding its way online – and embedded processors are providing the smarts for that transition.

Like the C-Series and E-Series APUs, AMD's G-Series is a mashup of the company's new Bobcat x86 compute core with a Radeon-based GPU core, both on the same piece of silicon.

All three APUs offer DirectX 11 support – lacking in Intel's latest Sandy Bridge processor line – plus support for OpenGL 4.0 and OpenCL, and hardware decode support for H.264, VC-1, MPEG2, WMV, DivX, and Adobe Flash.

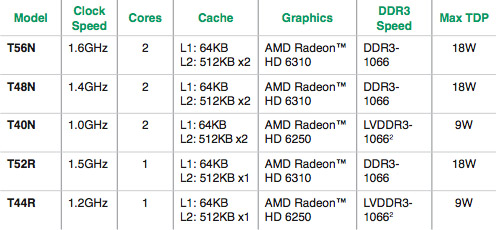

You can see a full list of the G-Series capabilities by taking a gander at its product brief, but a summary of the five parts that make up the series is as follows:

According to AMD, the G-Series is targeted at "embedded applications such as Digital Signage, x86 Set-Top-Box (xSTB), IP-TV, Thin Client, Information Kiosk, Point-of-Sale, and Casino Gaming" – all of which can be upstanding citizens in the internet of things.

AMD also trumpeted a host of design wins in its announcement, including companies such as Advansus, Compulab, Congatec, Fujitsu, Haier, iEi, Kontron, Mitec, Quixant, Sintrones, Starnet, WebDT, Wyse, "and many others."

Some of those companies may not be household names, but there's a good chance that one of them supplies the innards to something you use on a regular basis. A Sintrones V-BOX, for example, might have been aboard the bus or train that took you to work this morning, or a Kontron or Quixant controller may have powered the one-armed bandits that entertained attendees at the recent Las Vegas Consumer Electronics Show during their off hours. ®