IBM's mainframe-blade hybrid to do Windows

Tighter server coupling coming?

It's the network, stupid

It is pretty easy to draw some pretty pictures and to network blade servers to rack servers and put some switches between them to link them together. Data centers have been doing this for decades, after all.

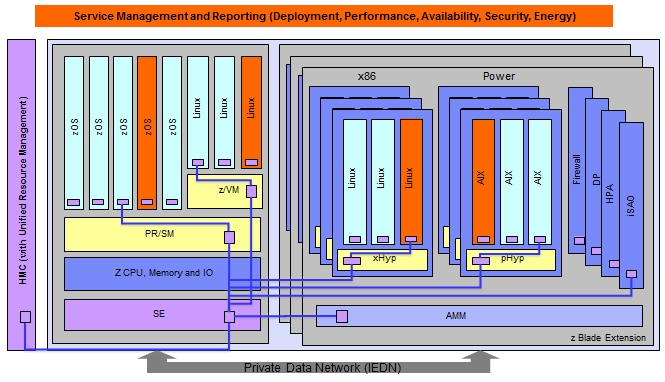

The secret sauce to the zEnterprise 196-zBX hybrid is not just networking, but the way that it is networked, says Frey. With the setup IBM has created, the hypervisors on the Power and Xeon blades are treated just like system firmware on the mainframe, just like the PR/SM hypervisor. So PR/SM, PowerVM, and RHEV are all treated like firmware on the mainframe and are all linked back to the mainframe and to the Unified Resource Manager tool on the mainframe by a point-to-point Gigabit Ethernet network that's implemented in a switch that has long since been buried in the bulk power hub in the System z mainframe.

This switch hooks into the Advanced Management Module (AMM) in the BladeCenter chassis. The Unified Resource Manager tool uses SNMP interfaces to manage the BladeCenter hardware, and has hooks into the PowerVM and KVM hypervisors to manage virtual machine partitions on the Power and Xeon blades.

Here's what the management networks look like schematically:

As the schematic shows, this internal network is used to manage the deployment, performance monitoring, availability, security, and energy management aspects of the virtualized servers in the hybrid box.

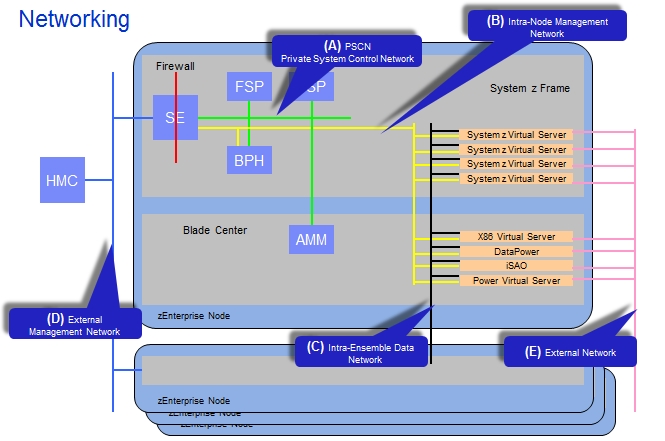

Here's a more detailed view of the internal and external networks in the zEnterprise-zBX ensemble:

Network B in the picture is the Intra-Node Management Network, and it has redundant switches from Blade Network Technologies (recently acquired by IBM) in the BladeCenter chassis that link back into the Ethernet switch in the System z frame. This network does not have any encryption on it because it is not connected to the outside world.

In the above chart, FSP is short for flexible service processor, which implements Network A, the Private System Control Network. The SE in the chart is short for Support Element, and it is a mirrored set of laptops that lets mainframe admins get access to the mainframe firmware. The HMC is short for Hardware Management Console, and this is used to manage multiple mainframe systems. (In the Power line, the HMC manages PowerVM hypervisors, and this is not the same device despite having the same name.)

The old mainframe HMCs were essentially stateless, according to Frey, but with the zEnterprise-zBX hybrid the HMCs are more stateful, having knowledge about the conditions on the nodes and ensembles in the clusters. In fact, the Unified Resource Manager software runs piecemeal on the HMC, the SE, and in other elements like the AMM and hypervisors.

Network C in the schematic is a customer-accessible network called the Intra-Ensemble Data Network, a flat Layer 2 network that links all of the virtualized servers in the mainframe, Power, and Xeon servers together, and is based on top-of-rack 10 Gigabit Ethernet switches from Juniper Networks. This network does not have any Fibre Channel over Ethernet (FCoE) support for converging server and storage networks, but IBM is prepping such support for the next generation of the System z-BladeCenter hybrid.

Network D is an external management network that links the HMC to the SEs in multiple System z mainframes in an ensemble, and Network E links the server slices to the outside world where they can get orders to process work.
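For anyone trying to keep the five networks straight, the descriptions above can be collected into a small lookup table. What follows is only a summary sketch in Python: the `Network` class, its field names, and the `describe` helper are made up for illustration and are not IBM terminology, and a name is left as `None` where no formal name is given above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Network:
    name: Optional[str]  # None where the article gives no formal name
    purpose: str         # paraphrased from the article's description


# Keys are the letters used in IBM's schematic.
NETWORKS = {
    "A": Network("Private System Control Network",
                 "system control, implemented by the FSP"),
    "B": Network("Intra-Node Management Network",
                 "management links from the BladeCenter switches to the "
                 "Ethernet switch in the System z frame; unencrypted"),
    "C": Network("Intra-Ensemble Data Network",
                 "flat Layer 2 10 Gigabit Ethernet data network linking "
                 "the virtualized servers; customer-accessible"),
    "D": Network(None,
                 "external management network linking the HMC to the SEs"),
    "E": Network(None,
                 "external network linking server slices to the outside world"),
}


def describe(label: str) -> str:
    """Return a one-line summary for a network letter (hypothetical helper)."""
    key = label.upper()
    net = NETWORKS[key]
    name = net.name or "unnamed in the article"
    return f"Network {key} ({name}): {net.purpose}"


if __name__ == "__main__":
    for label in NETWORKS:
        print(describe(label))
```

Calling `describe("c")` returns the summary line for the Intra-Ensemble Data Network; the table adds nothing beyond what the text already says.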

Now, I know what you are thinking: 'Why didn't IBM use InfiniBand instead of 10 GE switches for the Intra-Ensemble Data Network and external network?' InfiniBand has lower latency, higher bandwidth, and also offers Remote Direct Memory Access (RDMA) support, which is where the low latency comes from. RDMA lets a server reach out over InfiniBand and go directly into the main memory of another server node linked to it by InfiniBand, rather than having to pass requests for data up through the TCP/IP and OS stacks, over the network, down into another machine's stack and back again.

Frey was a bit cagey about this, but said that using InfiniBand for the C and E networks would not have obviated the need for 10 Gigabit Ethernet, so it was just easier to pick one network and ride it. And with 10 Gigabit Ethernet supporting the RDMA over Converged Ethernet (RoCE) protocol, which was announced in April 2010, IBM can do RDMA in a future release of the product, should it decide to.

Frey would not comment on future plans, but did say that IBM was interested in RDMA, not InfiniBand, per se. (IBM's PureScale Power-DB2 database cluster uses RDMA with Voltaire InfiniBand switches to tightly couple database nodes together and let them quickly share data.)

Frey did confirm that a future zEnterprise-zBX system would have link aggregation, allowing for more than 10Gb/sec of bandwidth to be pumped down into any single blade. He added that IBM was also working on ways to converge storage into the cluster and do a better job of virtualizing that storage, possibly including adding IBM's SAN Volume Controller for thin provisioning, copy services, and other features. Blades will also be able to link to mainframe file systems in the next release.
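The arithmetic behind link aggregation is simple enough: bundle N links together and the aggregate ceiling is N times the per-link rate. Here is a rough sketch in Python. The function name is hypothetical and the link counts are picked purely for illustration, since Frey gave no specifics on how many links a blade might get.

```python
LINK_RATE_GBPS = 10.0  # one 10 Gigabit Ethernet link


def aggregate_bandwidth_gbps(links: int,
                             link_rate_gbps: float = LINK_RATE_GBPS) -> float:
    """Theoretical ceiling for a bundle of identical links (hypothetical helper)."""
    if links < 1:
        raise ValueError("a bundle needs at least one link")
    return links * link_rate_gbps


if __name__ == "__main__":
    # Link counts here are assumptions, not IBM's stated plans.
    for n in (1, 2, 4):
        print(f"{n} x 10GE -> up to {aggregate_bandwidth_gbps(n):.0f} Gb/sec")
```

This is a ceiling, not a promise. Aggregation schemes typically spread traffic across links by flow, so a single conversation may still be limited to the speed of one link.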

IBM has not said how many System zEnterprise 196 customers it has, and it has not said how many have opted for the zBX hybrid. Conti reminded us that insurance giant Swiss Re was the first buyer of the new mainframe, buying two fully loaded zEnterprise 196 machines last September, each with 20,590 MIPS dedicated to z/OS workloads, ten Linux engines, and extra specialty engines for boosting Java performance, called System z Application Assist Processors, or zAAPs. Swiss Re is also going to implement the zBX blades at some point.

Conti said that IBM had "a bunch" of zBX shipments in the fourth quarter, without being precise about the number, and added that Big Blue expected the zBX sale to take a while. Most customers will upgrade to the new mainframes first, then consider the benefits of integrated blade servers being managed by the mainframe. ®