
Numascale brings big iron SMP to the masses

The NUMA NUMA song

Like a Dolphin

So, the entry card has 2 GB of write-back cache and 1 GB of tag memory, which supports server nodes in the cluster with 16 GB of main memory each; the midrange card has 4 GB of write-back cache and 2 GB of tag cache to support 48 GB of main memory per node; and the high-end card has 4 GB of write-back cache and 4 GB of tag cache to support 64 GB of main memory per node.

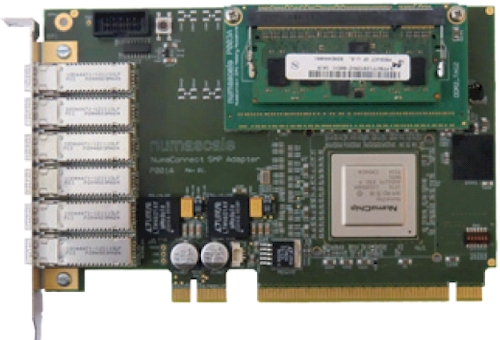

The NumaConnect SMP card: NUMA for the masses

The NumaConnect SMP adapter card that is based on the single-chip implementation of the Dolphin SCI technology implements a 48-bit physical address, which means it can see as much as 256 TB of memory as a single space, which works out to 4,096 server nodes with 64 GB each. Rustad says that the servers can be linked in a number of different configurations using the six NUMA ports on the card, including a giant ring or a 2D or 3D torus interconnect popularly used in parallel supercomputer clusters.

The latter two are interesting in that the NumaConnect cards can create a shared memory cluster for workloads that need a big memory space, but can also run applications using the Open MPI implementation of the Message Passing Interface (MPI) distributed clustering protocol. Obviously, the SCI implementation does not have the same bandwidth as QDR InfiniBand, but the Numascale technology allows you to use a cheap cluster with shared or distributed memory, whatever the application of the moment needs.
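The capacity figures and the torus wiring mentioned above are easy to sanity-check with a few lines of arithmetic. What follows is a minimal sketch, not anything from Numascale: the function names are hypothetical, the memory sizes are treated as binary units, and it assumes that a torus uses two ports per dimension (which is why six ports would suit a 3D torus). The 16 x 16 x 16 layout is just one illustrative way to arrange 4,096 nodes.

```python
# Illustrative arithmetic only. All names here are hypothetical,
# not part of any Numascale or Dolphin API.

ADDRESS_BITS = 48        # physical address width quoted in the article
NODE_MEMORY_GIB = 64     # main memory per node with the high-end card


def addressable_tib(bits: int) -> int:
    """Memory reachable with `bits` address bits, in TiB (2**40 bytes)."""
    return 2 ** bits // 2 ** 40


def max_nodes(bits: int, node_gib: int) -> int:
    """Number of nodes with `node_gib` GiB each that fit in the address space."""
    return 2 ** bits // (node_gib * 2 ** 30)


def torus_neighbors(coord, dims):
    """Return the direct neighbors of `coord` in a torus with side lengths `dims`.

    Assumption: each dimension uses two links (one per direction) and wraps
    around at the edges. Sides shorter than 3 would yield duplicate neighbors.
    """
    neighbors = []
    for axis, size in enumerate(dims):
        for step in (1, -1):
            neighbor = list(coord)
            neighbor[axis] = (neighbor[axis] + step) % size
            neighbors.append(tuple(neighbor))
    return neighbors


if __name__ == "__main__":
    print(addressable_tib(ADDRESS_BITS), "TiB addressable")
    print(max_nodes(ADDRESS_BITS, NODE_MEMORY_GIB), "nodes at 64 GiB each")
    # A corner node in a hypothetical 16 x 16 x 16 torus (4,096 nodes).
    for n in torus_neighbors((0, 0, 0), (16, 16, 16)):
        print(n)
```

Under these assumptions a node in a 3D torus has exactly six neighbors, one per port, while a 2D torus would use only four of the six ports.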

The software-based vSMP Foundation clustering technology from ScaleMP, which was just recently upgraded to version 3.5, works in much the same manner but uses no special chips (it is implemented in low-level systems software and uses an InfiniBand interconnect with the RDMA protocol as a transport). The difference is that Numascale's hardware-based solution supports Windows, Linux, or Solaris, while ScaleMP only supports Linux. Conversely, ScaleMP runs on any x64-based server you have, while the Numascale adapter is restricted to a fairly tight selection of Opteron-based machines that have HyperTransport HTX expansion slots.

For the moment, at least. Rustad says that there is no reason why the NumaChip could not be implemented to fit into an Opteron or Xeon socket, with a connector coming out of the chip in the socket to link it to the six NUMA ports on the card. By the way, even if you use an HTX-based machine, plugging in the NumaChip eats a socket's worth of memory space in the box, so you are going to lose a processor socket no matter what you do. Obviously, for certain workloads, building a cluster based on two-socket Opteron machines is not going to offer the best memory expansion, and it may even offer worse bang for the buck per node because the nodes are CPU challenged.

The entry NumaConnect adapter card costs $1,750, while the high-end card costs $2,500. Rustad says that Numascale has 100 cards in stock now and will get another batch in February. At the moment, the company has tested the chips using a twelve-node cluster running Debian Linux and has not yet tested Windows or Solaris. The NUMA links between nodes are based on DensiShield I/O cables from FCI, which put eight pairs of wires (four bidirectional links) into a square connector. FCI makes cables for just about any protocol you can think of.

IBM's Microelectronics Division is fabbing the NumaChip ASICs for Numascale. ®