Intel Sandy Bridge many-core secret sauce

One ring to rule them all

IDF During the coming-out party for Intel's Sandy Bridge microarchitecture at Chipzilla's developer shindig in San Francisco this week, two magic words were repeatedly invoked in tech session after tech session: "modular" and "scalable". Key to those Holy Grails of architectural flexibility is the architecture's ring interconnect.

"We have a very modular architecture," said senior principal engineer Opher Kahn at one session. "This ring architecture is laid out in such a way that we can easily add and remove cores as necessary. The graphics can also have different versions."

How many cores was Kahn talking about? At another session he referred to "some future implementation with 10 cores or 16 cores." And although all of the materials presented at the conference referenced a four-core Sandy Bridge implementation, Kahn also referred at one point to a "two core product."

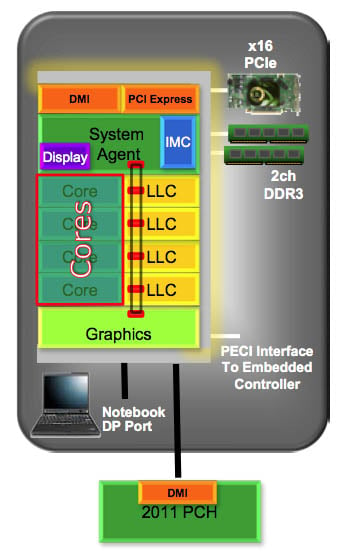

The Sandy Bridge ring interconnect manages how the various and sundry parts of the processor communicate with one another: the compute cores with one shared "cache box" per core, the graphics subsystem, and finally the "system agent" — the chip area that includes such niceties as PCIe, direct media interface (DMI), integrated memory controller (IMC), display engine, and power management.

Here, the ring interconnect is the loop that looks like a train track

"The cores talk to the ring directly, they don't talk to any other element," said Kahn. "The graphics talks to the ring, the ring talks to the cache box, and the cache box talks to the system agent. And in some cases they can talk to each other, but all communication is done over the ring."

The ring interconnect was needed for a number of reasons, not the least of which is that Sandy Bridge crams so many different functions onto one piece of silicon.

"In the previous generation we really had a multi-chip package, with a separate CPU — a more traditional CPU that looked a little bit like Merom and Conroe family, the Nehalem — with cores and a last-level cache," Kahn said — in Intel's latest parlance, by the way, what previously was often referred to as an L3 cache is now known as a last-level cache, or LLC for short.

Connected to that CPU in the same package was a second chip with integrated graphics, memory control, PCIe, and more. For Sandy Bridge, "We basically dropped all that and integrated everything into one piece of silicon," he said.

The development of the ring interconnect started with a relatively clean sheet of paper. "We really started from scratch," said Kahn. "Everything that connects the cores, connects to the system, memory controller: everything was redesigned for Sandy Bridge from scratch."

Not that a ring interconnect is brand new in Sandy Bridge. "The ring architecture as a concept actually started in Nehalem-EX," said Kahn, "which is an eight-core server. They needed that bandwidth for server; we actually figured out that we need similar bandwidth and similar behavior in the client space."

And the bandwidth that the ring interconnect provides is impressive. "Our bandwidth provided by this ring for each element connected to the ring connect gives 96 gigabytes per second ... if you're talking about running at 3GHz," Kahn said. "The multi-bank last-level cache for a four-core product provides upwards of 380 gigabytes per second. This is 4X of what existed in previous generations. Even the two-core product is 190 gigabytes per second."
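Kahn's figures hang together arithmetically, and a quick back-of-the-envelope check shows how. The sketch below is our own reading, not something Intel presented: it assumes that 96GB/s at 3GHz means each ring stop moves 32 bytes per clock cycle, and that last-level cache bandwidth scales linearly with one cache bank per core. The function names and the `BYTES_PER_CYCLE` constant are made up for illustration.

```python
# Back-of-the-envelope check of the quoted ring bandwidth figures.
# Assumption (ours, not stated in the session): 96 GB/s at 3 GHz
# implies 32 bytes transferred per ring stop per clock cycle.
BYTES_PER_CYCLE = 32


def per_stop_bandwidth_gbs(clock_ghz: float,
                           bytes_per_cycle: int = BYTES_PER_CYCLE) -> float:
    """Bandwidth of one ring stop in GB/s: bytes per cycle times GHz."""
    return bytes_per_cycle * clock_ghz


def llc_bandwidth_gbs(banks: int, clock_ghz: float) -> float:
    """Aggregate LLC bandwidth, assuming one bank per core and linear scaling."""
    return banks * per_stop_bandwidth_gbs(clock_ghz)


if __name__ == "__main__":
    print(per_stop_bandwidth_gbs(3.0))   # 96.0, the per-element figure
    print(llc_bandwidth_gbs(4, 3.0))     # 384.0, "upwards of 380"
    print(llc_bandwidth_gbs(2, 3.0))     # 192.0, quoted as roughly 190
```

Under those assumptions, the four-core and two-core totals are simply the per-stop figure multiplied by the number of cache banks, which is why the aggregate number grows as cores are added to the ring.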

A four-fold increase in bandwidth "is really a necessity for the graphics," he said. "Probably higher than or close to what all four cores need together can be consumed by the graphics."