HP spreads wings with 'butterfly' data centers

Modules take flight

So long, raised floors

According to Peter Gross, vice president and general manager of Critical Facilities Services at HP, the Flexible DC modular data center does not use the raised floors typical of data centers from the old water-cooled mainframe era. But HP and its partners for the product are offering a variety of cooling options for the butterfly designs, suited to whatever climate the data center is in.

These include exterior air handlers that let outside air into the data center through the side walls, where it is filtered, pulled through the equipment racks, and exhausted through the roof; this outside-air cooling option has HVAC direct expansion (DX) backup for when the weather gets hot. You can also seal the butterfly data center up and use normal air or evaporative cooling, if you want. (There is no chilled water option.)

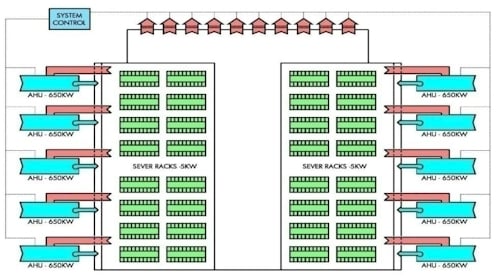

The M8 module can hold eight rows of server racks, as you can see here:

The Flexible DC also assumes customers are going to want to eliminate as much stepping down and transformation of the juice coming in from the power company as possible to cut down on power handling equipment and to save energy. This isn't required, of course, but it is highly recommended.

If you need more than 24,000 square feet of data center floor with 3.2 megawatts of power and cooling capacity, HP suggests that you cookie-cutter the Flexible DCs and build a campus of them. Thus:

If you look at the geometry of the butterfly data centers, you might think you could interlock long rows of them together to conserve space, but the hallways do not line up that way and you would be blocking wall space that might be dedicated to air intake, power conditioning, and generators.

Gross says that a butterfly data center has a power usage effectiveness (PUE) rating of 1.25 or lower, depending on the options customers select and the climate it is plopped into. That is, according to the Environmental Protection Agency, as cited by Google, somewhere between "best practices" and "state-of-the-art" in terms of energy efficiency. (PUE is the total power pumped into the data center for cooling and IT gear divided by the power consumed by the IT gear.) Google's own PUE, as you can see, ranges from about 1.15 to 1.25.
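For readers who want to see the ratio worked through, here is a minimal Python sketch of the PUE definition given above. The function names and the 1,000 kW IT load are illustrative assumptions, not figures from HP or Google.

```python
def pue(total_facility_kw, it_load_kw):
    """Power usage effectiveness: total facility power divided by IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    if total_facility_kw < it_load_kw:
        raise ValueError("total facility power cannot be less than the IT load")
    return total_facility_kw / it_load_kw


def overhead_kw(it_load_kw, pue_value):
    """Power spent on cooling and other non-IT overhead at a given PUE."""
    return it_load_kw * (pue_value - 1.0)


# Hypothetical example: 1,000 kW of IT gear plus 250 kW of cooling and other overhead.
print(pue(1250.0, 1000.0))        # 1.25
print(overhead_kw(1000.0, 1.25))  # 250.0
```

In other words, at a PUE of 1.25, every kilowatt delivered to servers costs a further quarter of a kilowatt in cooling and power handling.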

While there is plenty of talk about PUE and density in data center circles, Gross says that is not the real problem. The cost of the data center facility is. Gross estimates that a modern brick-and-mortar data center costs about $25m per megawatt. But Gross says that by standardizing components in the prefabricated data centers, and by building and testing them in a factory instead of custom designing and custom building each one, HP and its partners will be able to put a Flexible DC into the field for one-half to one-third of that cost. And instead of taking two years to build it, HP will be able to do it in six to nine months.
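To put rough numbers on that claim, the sketch below applies Gross's $25m-per-megawatt estimate and the one-half to one-third range to a 3.2 megawatt facility. It assumes that cost scales linearly with capacity and that the 3.2 megawatt figure is the capacity being priced; the article states neither, and the function name is made up for illustration.

```python
CONVENTIONAL_COST_PER_MW = 25_000_000  # Gross's estimate, in dollars


def build_cost_range(megawatts):
    """Return (conventional, flexible_high, flexible_low) build costs in dollars.

    Assumes cost scales linearly with capacity, which the article does not state.
    """
    conventional = CONVENTIONAL_COST_PER_MW * megawatts
    return conventional, conventional / 2, conventional / 3


conventional, high, low = build_cost_range(3.2)
print(f"Conventional build: ${conventional / 1e6:.1f}m")
print(f"Flexible DC:        ${low / 1e6:.1f}m to ${high / 1e6:.1f}m")
```

Under those assumptions, a conventional 3.2 megawatt build comes to about $80m, against roughly $27m to $40m for the prefabricated design.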

"Today, a high-end data center costs $25m per megawatt to build, and this is not sustainable," says Gross. "And this is why co-location has been growing like crazy, because people are trying to avoid these costs. Demand for data center capacity is strong, but supply is not there because of the high cost."

Gross says that HP was originally just thinking of pitching the Flexible DC product to cloud, search, and other hyperscale Web companies that are looking at shelling out $200m to $300m to build their next data centers. But instead, the company designed an offering that would be appealing to a lot more customers.

Gross estimates that there are about 12,000 data centers worldwide with 20,000 square feet or more of floor space, and that many of them are very inefficient – so inefficient that companies will have little choice but to replace them. But at $25m per megawatt and rising, a replacement looks too expensive every year, and they make do. The mass production of data centers that HP is talking about providing – call it an Industry Standard Data Center, akin to an x64 server – could break the logjam and get a lot more data centers built.

Don't expect the local construction workers to be happy about it.

Gross says that the Flexible DC product is in its advanced design stage now and will be ready to sell to customers in the next three to six months. HP is in discussions with three different partners to help with the construction, power, and cooling, but Gross would not name names. Customers who want to start speccing out a butterfly data center can contact HP starting today and get moving. HP plans to sell the butterfly data centers on a global basis, not just in North America. ®