
Oracle tunes Solaris for Intel's big Xeons

Eight-socket box catching up to Sparc

Word is trickling out of Oracle that its recently acquired Solaris has been heavily tuned to support Intel's new eight-core Nehalem-EX Xeon 7500 beasties.

Pity, then, Oracle does not yet seem to have a Nehalem-EX box in the field. But the indications are that Oracle is working on an eight-socket box.

First, said eight-socket box. In a briefing with El Reg, Shannon Poulin, director of Xeon platform marketing at Intel, flashed a foil that showed all the vendors making Nehalem-EX servers by socket count and form factor. Oracle was not among the group making two-socket blade and rack servers using the Xeon 7500 and its HPC variant, the Xeon 6500 - which is only available for two-socket machines. Nor was Oracle among another group of server peers who admit they are making four-socket machines using the Xeon 7500.

Oracle was, though, among the vendors named in the presentation that were working on machines with eight or more sockets.

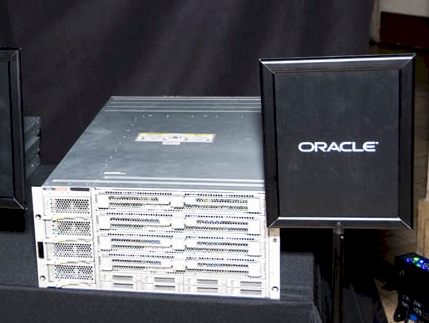

And then there is the photographic evidence below - compliments of our very own Rik Myslewski, who spotted an Oracle Nehalem-EX in the wild at the launch event Intel hosted in San Francisco.

This don't look like a four-socket box, does it?

If that is indeed an eight-socket Sun Fire server, then it looks like Oracle is taking the uniboard design it used with the Sparc servers, and later applied to Opteron machines, and using it on Nehalem-EX machines.

The server sure looks like eight separate system boards that slide into the chassis horizontally, with eight disk drives underneath and four power supplies off to the side. If that is not a Nehalem-EX machine, it could be an 8U chassis that is cramming in eight two-socket servers on cookie sheet trays. Intel did not bring out any machinery as part of its dog and pony show, so I could not peek into this box and see what it had inside.

In the absence of shipping Nehalem-EX iron, Oracle is talking up how OpenSolaris, the development version of Solaris, has been tweaked so it can support some of the new features in the latest Xeon chips from Intel. Scott Davenport, one of the developers responsible for OpenSolaris at Oracle, said in a blog that he has been working since last summer with Intel's engineers to align OpenSolaris and Xeon chips, and that the hot plug CPU and memory features of the Unix variant now work with the Xeon 7500s.

The current Fujitsu-designed Sparc64-based Sparc Enterprise M servers already support hot plug CPU and memory cards when running Solaris 10, and Sun itself has supported hot plugging of these components since the UltraSparc-III systems nearly a decade ago. Support could be even older than that, going back to the Starfire E10000 high-end servers. If so, add it to the comments at the end.

Just as the Nehalem-EX chips were being readied for market, Oracle put out a whitepaper outlining the optimizations that the OpenSolaris and Solaris team have made for the Westmere-EP Xeon 5600s and the Xeon 7500s.

Oracle didn't say anything precise about its own Xeon 7500 iron in that report, but did say that on a Java server-side benchmark test, it was able to show near linear scalability in moving from a Xeon 7500 server with four sockets to one with eight sockets. Ditto for an unnamed CPU-intensive workload.

Oracle added that the four-socket Xeon 7500 machines showed about twice the performance of the current four-socket Xeon 7400 machines, and offered up to four times the memory bandwidth thanks to the shift to QuickPath Interconnect from the old frontside bus used in the 7400s.

Oracle and Intel have worked on Solaris Fault Manager, which diagnoses hardware and software errors and offlines components that are faulty. Solaris FM now can function atop Xeon 5600 and 7500 processors, and the utility embedded in Solaris also can tap into the Machine Check Architecture (MCA) recovery feature that was added to the Xeon 7500s to recover from double-bit memory errors.

This was one of the big features Intel was going on about at the Nehalem-EX launch at the end of March. ®