
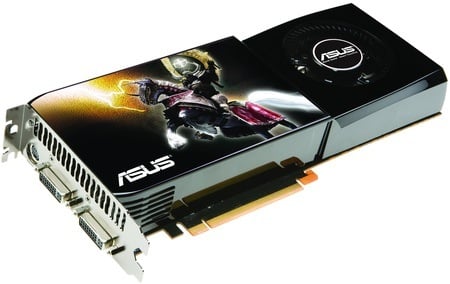

Asus ENGTX285 TOP overclocked graphics card

Nvidia's new GeForce GTX 285 makes its debut

Review Asus' ENGTX285 TOP graphics card is based around Nvidia’s latest graphics chip, the GeForce GTX 285. This is the GT200b core, which is a die-shrink from 65nm to 55nm of the GT200 that was the basis for last summer's big Nvidia release, the GeForce GTX 280.

Asus' ENGTX285 TOP: factory overclocked GTX 285

All of the features in the older chip have been carried over to the new one, so the GTX 285 still supports DirectX 10.0 and OpenGL 2.1, has 240 unified shaders, and memory support runs to GDDR3 rather than the spiffy GDDR5 that AMD uses in the Radeon HD 4870. Display processing is still managed by an NVIO2 core with support for twin DVI ports and HDMI. DisplayPort still isn’t included.

The smaller fabrication process has reduced the size of the GPU, bringing down the production cost and the power consumption. This has given Nvidia some leeway with the GTX 285's power envelope, and it has chosen to increase the clock speeds while maintaining the same cooling parameters as the GTX 280. Lay a GTX 280 next to a GTX 285 and you won’t be able to tell the two cards apart, as the hefty cooling packages look identical.

Surprisingly, Nvidia has been able to reduce the maximum power rating of the GTX 285 to such an extent that it has two six-pin PCI Express power connectors instead of the six-pin and eight-pin connector combo that we've seen in the past. This is a relatively minor change if you only plan on running a single graphics card, but anyone considering GTX 285 in SLI mode should be ecstatic as power supplies with four six-pin connectors are relatively commonplace.

A reference GTX 280 has feeds and speeds for the core, memory and shaders of, respectively, 600MHz, 2200MHz and 1300MHz. These step up to 648MHz, 2484MHz and 1476MHz with the GTX 285, which suggests an increase in performance of roughly ten per cent.

The Asus TOP is overclocked at the factory and runs core, memory and shaders at 670MHz, 2600MHz and 1550MHz. That, in turn, suggests that it should be four or five per cent faster than a stock GTX 285 and 15 per cent faster than a reference GTX 280. That is indeed what our test results show, but let’s not get ahead of ourselves.
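For anyone who wants to check the sums, the clock speeds quoted above can be turned into percentage gains with a few lines of Python. This is only a back-of-the-envelope sketch: the names `CLOCKS`, `uplift` and `report` are our own, and it assumes that clock speed is a fair stand-in for performance, which real games won't always bear out.

```python
# Clock speeds in MHz as quoted in the text, in the order (core, memory, shader).
CLOCKS = {
    "GTX 280 reference": (600, 2200, 1300),
    "GTX 285 reference": (648, 2484, 1476),
    "Asus ENGTX285 TOP": (670, 2600, 1550),
}


def uplift(new, old):
    """Return the percentage increase of each clock on card `new` over card `old`."""
    return tuple(
        round((n / o - 1) * 100, 1)
        for n, o in zip(CLOCKS[new], CLOCKS[old])
    )


def report():
    """Print the clock-speed gains for the three comparisons made in the text."""
    comparisons = [
        ("GTX 285 reference", "GTX 280 reference"),
        ("Asus ENGTX285 TOP", "GTX 285 reference"),
        ("Asus ENGTX285 TOP", "GTX 280 reference"),
    ]
    for new, old in comparisons:
        core, memory, shader = uplift(new, old)
        print(f"{new} vs {old}: core +{core}%, memory +{memory}%, shader +{shader}%")


if __name__ == "__main__":
    report()
```

On these figures the GTX 285 gains between 8 and 13.5 per cent over the GTX 280 depending on the clock, the Asus TOP adds between 3.4 and 5 per cent over a stock GTX 285, and the TOP sits between 11.7 and 19.2 per cent ahead of a reference GTX 280.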