
The Legacy Dilemma

Architecture giveth, vendors taketh away?

In IT circles, the term ‘legacy’ is generally bandied about as a kind of shorthand, to suggest that a system or application has reached a certain point in its existence where the only way is down. There is no concrete definition – well, there is if you browse the 'net, but it tends to reflect the above (for example, “a system which has been superseded but which is still in use”). Which isn’t really much help in system planning.

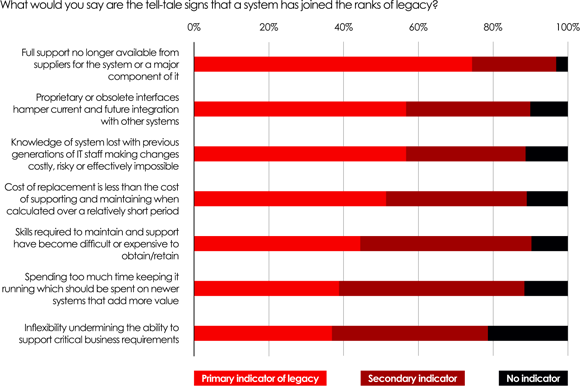

So, other than such bland generalisations, is it possible to characterise legacy and if so, how? To resolve this issue, who better to ask than the Reg readership? In fact, the answers we received to our poll last week were pretty telling. As we can see from Figure 1, the number one characteristic that could quickly turn mainstream into has-been was the question of vendor support. Hmm, does this suggest that IT environments would be better able to withstand the vagaries of change if only vendors allowed them? Well, yes, actually it does.

While none of the other characteristics were seen as irrelevant – in other words, they all have a part to play – it is worth calling out the lack-of-system-flexibility driver, which appears bottom of the list. Far more important than the flexibility of individual systems, and indeed ranking equally with the loss of skills over time, is the issue of integration between systems. This is fascinating stuff, if for no other reason than it validates the need to consider the IT environment as a whole, over and above treating individual systems.

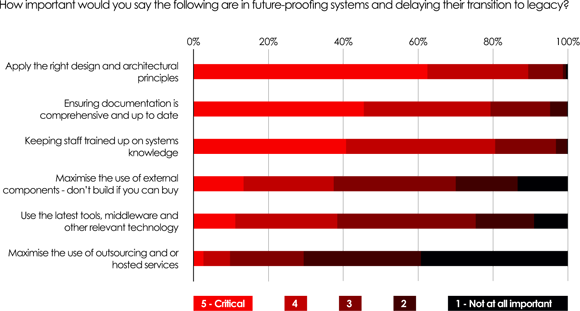

This priority is reflected when we consider what the 303 poll respondents saw as most important to protect against systems becoming legacy before their time. As we can see from Figure 2, having good architecture and design in mind is the number 1 criterion. Unfortunately, we know from other studies that this isn’t always treated as a high priority early in the design stage. However, at least it’s good to know – findings such as these are a useful counter to anybody who suggests they’ll worry about such things later.

Of course, architecture can’t protect against individual vendors withdrawing support. However, what we can do is extrapolate these findings to the IT environment as a whole. If integration is a key issue, and well-architected systems a key protection, it is not hard to see how such questions as open standards and interoperability become important when ensuring the future-safety of our systems and applications. Built-to-last IT is an oxymoron and nobody can prevent change from happening, but at least we can make savvy design decisions early on, which can extend the life of both individual systems and the whole infrastructure.