Original URL: https://www.theregister.com/2007/11/20/accelerators_fpga_gpu_sc07/

GPGPUs and FPGAs are now fully implanted in our brains

The server booster bonanza takes hold

Posted in Channel, 20th November 2007 08:07 GMT

SC07 One topic - even more so than cheap shrimp - dominated this year's Supercomputing conference in Reno: Accelerators.

The server and chip industries have felt the rise of the accelerator coming for some time. Last year's conference, for example, had the hardware heads pitted against the coders. The software set wondered if enough developers would ever exist to write custom code for tricky FPGAs and GPGPUs (general purpose graphics processors). The hardware crowd countered such skepticism by forcing pitches about their super silicon down the throats of anyone who showed even a faint sign of interest in the technology. Meanwhile, customers in the financial services, oil and gas and media markets hoped these two sides would work out their differences. They want dramatic performance gains, and they want them now.

Reno's monument to cheap shrimp

Much of the reticence around the server accelerators vanished at Supercomputing '07. Yes, the software folks still have their concerns. And, yes, grizzled veterans complain that they've seen accelerator fads come and go. Ultimately, however, it's clear to us that enough big vendors, ISVs, start-ups and customers have coalesced around the accelerator idea to push the technology forward in a profound way.

So, let's take a look at a number of the accelerator options out there from both the hardware and software sides and try to see where things stand.

ClearSpeed - The Floater

ClearSpeed is one of the more mature accelerator players in the server market, having specialized in speeding up floating point operations for some time. The start-up enjoys strong ties to companies such as HP and Sun Microsystems and has its accelerator cards sitting in a couple of the world's top supercomputers.

At Supercomputing, ClearSpeed rolled out a new, complete accelerator system that complements the company's X620 and e620 cards, which plug into PCI-X and PCIe slots, respectively. The CATS (ClearSpeed Accelerated Terascale System) unit takes up 1U of rack space and can reach up to a Teraflop.

ClearSpeed manages to cram 12 of the e620 cards into the CATS box, leaving a system that should cost around $70,000. For the moment, ClearSpeed will sell the CATS units in limited volumes. The company hopes to see larger OEMs offer the product during 2008.

During a demonstration, ClearSpeed linked 12 of the CATS units with an HP ProLiant DL360 server. It then sent all 144 of its 96-core CSX600 chips at the quantum chemistry code Molpro and reached 11.6 Teraflops while consuming about 6.6kW of power (550 watts per CATS node).

(ClearSpeed hits this kind of performance on the back of its CSX600 chip, which can show 25 gigaflops of sustained 64-bit computing. Two of the chips sit on each e620 card.)
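The demo figures above can be cross-checked with a little arithmetic. The sketch below uses only numbers quoted in this article (12 CATS units, 550 watts per unit, 11.6 Teraflops on Molpro); the variable and function names are ours, and the gigaflops-per-watt result is simple division rather than a figure ClearSpeed published.

```python
# Figures quoted in the article for ClearSpeed's Molpro demonstration.
CATS_UNITS = 12            # CATS boxes linked to one HP ProLiant DL360
WATTS_PER_CATS = 550       # power draw per CATS node, in watts
DEMO_TERAFLOPS = 11.6      # aggregate throughput reported for Molpro


def total_power_kw(units: int, watts_per_unit: float) -> float:
    """Total power draw of the accelerator units, in kilowatts."""
    return units * watts_per_unit / 1000.0


def gflops_per_watt(teraflops: float, kilowatts: float) -> float:
    """Throughput per watt: convert TF to GF and kW to W, then divide."""
    return (teraflops * 1000.0) / (kilowatts * 1000.0)


power_kw = total_power_kw(CATS_UNITS, WATTS_PER_CATS)
per_unit_tf = DEMO_TERAFLOPS / CATS_UNITS
efficiency = gflops_per_watt(DEMO_TERAFLOPS, power_kw)

print(f"Total power: {power_kw:.1f} kW")
print(f"Per CATS unit: {per_unit_tf:.2f} Teraflops")
print(f"Efficiency: {efficiency:.2f} gigaflops per watt")
```

The per-unit result of roughly 0.97 Teraflops lines up with the "up to a Teraflop" claim for a single CATS box, and the total matches the 6.6kW quoted for the demo. Note that the power figure covers the CATS nodes only, not the host server.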

Most of ClearSpeed's critics whine about the amount of software work that needs to be done to port a floating point-heavy application over to these custom chips. ClearSpeed has worked hard to rebuff these claims.

Herding CATS

For one, it notes that a number of applications such as Matlab and Mathematica can run on the CSX600 chips without any changes to the underlying code. That comes thanks to work done by ClearSpeed and the software makers, and to the presence of friendly ClearSpeed libraries.

ClearSpeed also continues to work on its core software package, which is meant to ease the porting process for customers and partners. It has released a beta of Version 3.0 that includes support for Red Hat Enterprise Linux 5 64-bit and Suse Linux Enterprise Server 10 64-bit. In addition, customers will find support for a wider range of BLAS and LAPACK functions, new library functions and a preview of an Eclipse IDE.

Another nice feature in the ClearSpeed software is the presence of graphical tools that show spots in code which will benefit from acceleration.

It's easy for rivals to relegate ClearSpeed to the floating point niche and say that its hardware is a pain, but growing mainstream acceptance should make it tougher for end users to ignore the company. HP has folded ClearSpeed into its accelerator helper program, while Sun relies on ClearSpeed for some of its most prominent high performance computing wins.

ClearSpeed's story should get stronger in the coming months when it releases revamped silicon that improves overall performance and when it takes care of a lingering denormalized operands issue.

The Tesla Foil

Not to be outdone by some upstart, Nvidia has been hammering away at its accelerator play too.

While at Supercomputing, Nvidia found time to hype up Version 1.1 of CUDA and its Tesla acceleration systems.

CUDA (Compute Unified Device Architecture) is Nvidia's current answer to software issues. In total, the CUDA toolkit gives developers a C compiler for GPUs, a runtime driver, and FFT and BLAS libraries. With the fresh release of CUDA 1.1, Nvidia has added support for 64-bit Windows XP (broad Linux and 32-bit XP support was already there), while also declaring that it will include the CUDA driver with standard Nvidia display drivers.

Using the CUDA software, developers can tap into GPUs for speed-ups on a wide variety of applications, including those most near and dear to the high performance computing crowd's heart - stuff like Matlab or Monte-Carlo option pricing.

As Nvidia explains it,

Where previous generation GPUs were based on “streaming shader programs”, CUDA programmers use ‘C’ to create programs called kernels that use many threads to operate on large quantities of data in parallel. In contrast to multi-core CPUs, where only a few threads execute at the same time, NVIDIA GPUs featuring CUDA technology process thousands of threads simultaneously enabling high computational throughput across large amounts of data.

GPGPU, or "General-Purpose Computation on GPUs", has traditionally required the use of a graphics API such as OpenGL, which presents the wrong abstraction for general-purpose parallel computation. Therefore, traditional GPGPU applications are difficult to write, debug, and optimize. NVIDIA GPU Computing with CUDA enables direct implementation of parallel computations in the C language using an API designed for general-purpose computation.
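To make the kernel idea concrete, here is a rough sketch of the programming model Nvidia describes, written in plain Python rather than CUDA C so that it runs anywhere. It is an emulation under our own assumptions: the names `saxpy_kernel` and `launch` are hypothetical, and the loop in `launch` visits threads one after another where a GPU would run thousands at once.

```python
# Emulation of the CUDA "one thread per data element" model in plain Python.
# NOTE: saxpy_kernel and launch are hypothetical names for illustration;
# they are not part of Nvidia's CUDA toolkit.

def saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y, out):
    """Body executed by every thread: compute one element of a*x + y."""
    i = block_idx * block_dim + thread_idx   # global index of this thread
    if i < n:                                # guard threads past the end
        out[i] = a * x[i] + y[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Run the kernel once per (block, thread) pair.

    A GPU would schedule these threads in parallel; this loop runs them
    sequentially, which gives the same result for independent elements.
    """
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


n = 10
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n

block_dim = 4                                  # threads per block
grid_dim = (n + block_dim - 1) // block_dim    # enough blocks to cover n

launch(saxpy_kernel, grid_dim, block_dim, n, 2.0, x, y, out)
print(out)
```

The index arithmetic and the bounds check are the parts that carry over to a real kernel; the rest is scaffolding to stand in for the GPU's thread scheduler.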

CUDA received high praise at Supercomputing from Nvidia rivals and partners. Rival compliments usually serve as one of the surest signs that a given software package actually works as billed. Nvidia's Andy Keane, general manager of the GPU computing business, pointed us to several developers that ported their applications to an Nvidia GPU in a few hours. In many cases, these developers saw between 60 per cent and 150 per cent speed-ups with certain operations.
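A speed-up on certain operations is not the same as a speed-up for the whole application. The usual back-of-the-envelope tool here is Amdahl's law, sketched below. This is our illustration rather than anything Nvidia presented, and the 80 per cent runtime share and the speed-up factors are made-up inputs chosen only to show the shape of the curve.

```python
def overall_speedup(accelerated_fraction: float, function_speedup: float) -> float:
    """Amdahl's law: whole-program speed-up when only part is accelerated.

    accelerated_fraction -- share of original runtime spent in the offloaded
                            function (0.0 to 1.0)
    function_speedup     -- how many times faster that function runs on the
                            accelerator
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if function_speedup <= 0.0:
        raise ValueError("function_speedup must be positive")
    remaining = 1.0 - accelerated_fraction
    return 1.0 / (remaining + accelerated_fraction / function_speedup)


# Hypothetical inputs: a function that takes 80% of the runtime.
for factor in (2.0, 10.0, 100.0):
    print(f"{factor:>5.0f}x on the function -> "
          f"{overall_speedup(0.8, factor):.2f}x overall")
```

With 80 per cent of the runtime offloaded, the overall gain stays below 5x however fast the accelerator is, which is one reason the choice of which functions to move matters.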

"We are not asking customers to take an entire application and run it on a GPU," Keane said. "We're looking for them to put suitable functions on a GPU. You're designing software to run on a GPU and designing it appropriately."

Some FPGA rivals to Nvidia knock GPUs for introducing performance and heat issues. DRC, which we'll get to later, claims that GPGPU performance will often top out at about a 10 per cent speed-up on some applications because GPUs fail to handle loops well. "Any application with a dependency where it branches or loops back will have to exit the GPU and start over, which is where you get a huge performance penalty," DRC VP Clay Marr told us. In addition, GPUs tend to consume as much or a bit more power than standard CPUs, while FPGAs consume about 20 watts. Fill a cluster with GPU add-on cards, and you're talking about a hell of a lot of heat.

According to Keane, these performance claims are just plain untrue. Beyond that, it's FPGAs and not GPUs that are the real coding pain.

"With FPGAs, you are designing a chip," Keane said. "At the end of the design cycle, if something doesn't work, you have to go back to the start and redesign."

GPUs offer more flexibility from a coding standpoint and can keep up with customer changes, Keane said. A financial institution, for example, may make repeated tweaks to an algorithm and need to update its accelerator software for these alterations. This process happens at a much quicker pace with GPUs.

On the energy front, Nvidia thinks it tells a good enough story by matching x86 chips. You're seeing a major performance boost for certain operations while staying within the same power envelope. In addition, Nvidia can offer lower power GPUs if need be.

Nvidia's GPGPU story should improve next year when it matches ATI/AMD by rolling out double-precision hardware.

In the meantime, customers can check out Nvidia's various Tesla boards and systems. Those in search of the highest-end performance will want the four-GPU Tesla S870 server, while developers might lean toward the two-GPU D870 deskside supercomputer.

Just in case you're getting bored by our accelerator adventure, we'd like to offer you Verari's take on a booth babe as an intermission.

Always subtle - Verari presents the nice rack girls

Accelepizza

Companies like Nvidia need partners like Acceleware.

Based in Calgary, Acceleware slaps its unique breed of software on Nvidia's Tesla systems. At the moment, it specializes in speeding up software used for electromagnetic simulations. We're talking about code used to improve products ranging from cell phones and antennas to microwaves.

Proving the relative maturity of its software, Acceleware claims some very impressive customers - Nokia, Samsung, Philips, Hitachi, Boston Scientific and Motorola to name a few.

Acceleware tries to remove the complexity associated with developing software for a GPU by supplying its own libraries and APIs to partners and customers. The company then works hand-in-hand with clients to bring their existing code to GPUs - a process that takes "one developer about one month." The end result can be up to a 35x boost in performance.

Boston Scientific tapped Acceleware to figure out how pacemakers will interact with MRI machines. "Acceleware combines its proprietary solution with Schmid & Partner Engineering AG's (SPEAG) SEMCAD X simulation software and NVIDIA GPU computing technology, enabling engineers at Boston Scientific to supercharge their simulations by a factor of up to 25x compared to a CPU," we're told.

Bored by pacemakers? Well, General Mills turned to Acceleware for help figuring out how pizzas will behave in the microwave. Is there anything finer than modeling the interaction of processed cheese and radiation at high speed?

Looking ahead, Acceleware plans to add more software aids for oil and gas customers and to do more work in the medical imaging field.

Acceleware CTO Ryan Schneider insists that the work needed to port code to a GPU is not as daunting as it sounds.

"We're not saying, 'Here is a development kit. Everyone can do it.'" he told us. "We're going into a vertical and learning the algorithms and applications. It's a different approach from some of the other companies.

"That makes it sound like we're doing custom development work all the time, but there are core algorithms that everyone uses in these spaces. We take the work and get re-use."

Like ClearSpeed, Acceleware is part of HP's accelerator program. The company also has a relationship with Sun and plans to announce a partnership with Dell in the near future.

And now we head back to FPGA country.

We've followed Silicon Valley start-up DRC since it first appeared in early 2006.

DRC takes Xilinx FPGAs (field programmable gate arrays) and drops them right in Opteron processor sockets. This gives customers who are willing to do some coding work a chance to get anywhere from 10x to 60x better performance out of the Opteron socket while consuming just a fraction of the power (10-25 watts).

At the moment, you can only slot DRC's RPUs (reconfigurable processor units) into older Rev E sockets. But support for the most current Opteron boards "is right around the corner," according to CEO Larry Laurich.

From a performance per watt perspective, it's hard not to appreciate DRC's attack. FPGAs have a clear edge over power-hungry GPUs and give customers a real chance to reduce the energy needs of a cluster.

Supercomputing giant Cray has bought into this pitch, offering DRC-based modules as one of its accelerator options in the new XT5 systems.

Like all of the accelerator players, DRC is working to make its software story as strong as possible. So far, it has focused on the financial, oil and gas and bio-tech markets. DRC offers these customers a development platform and also helps with custom development. In time, DRC wants to offer a broad set of commonly used routines and algorithms to customers, reducing the amount of bespoke work that needs to take place.

Cray's flashy new XT5

"We can give them starting places that are way beyond what they would be able to do on their own and get them going in a short period of time," Laurich said.

In addition to the GPU set, DRC competes with fellow start-up XtremeData, which sells a very similar product based on Altera's FPGAs.

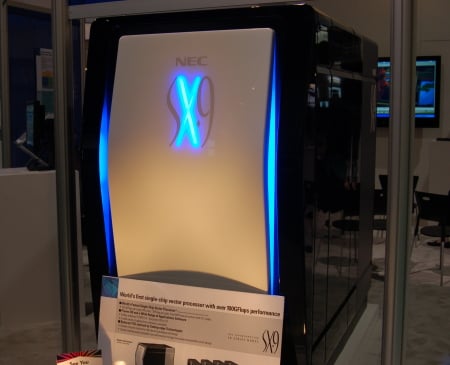

For good measure: NEC's new vector machine

DRC has long claimed the intellectual property rights behind the technology needed to run RPUs in Opteron sockets, but Laurich said there are no current plans to flog a lawsuit at XtremeData.

"We haven't run into any situation where we were competing with them and lost business," he said. "The market looks big enough for both of us, and we're both going as fast as we can."

DRC expects to be ready with a similar product once Intel ships its next-generation processors in 2008 that include integrated memory controllers and a high-speed interconnect - like today's Opterons.

Okay, okay. That's enough of the start-ups and fledgling efforts. Let's check out where the big boys stand with all this technology.

As mentioned, HP seems to have the most concrete programs in place to make sure these accelerator products reach customers as quickly as possible. HP has zeroed in on improving the development of multi-threaded code for both multi-core x86 chips and this more specialized silicon. In addition, it's funding and helping market some of these young accelerator companies.

We fully expect to see HP add FPGA products from either DRC or XtremeData or both to its arsenal in 2008, complementing existing support for products from ClearSpeed and Nvidia's boxes sold through Acceleware.

As we see it, Sun follows HP in the accelerator game. Sun has done some nice work with ClearSpeed on mega-clusters and appears dialed into the work being done by Acceleware and others. The server maker has yet to develop formal programs in line with HP, but it usually takes Sun a bit longer than its rivals to polish off the marketing bits and bobs.

IBM too seems interested in all of this accelerator madness, although we can't recall one conversation with the vendor about such products. Evidence of IBM's interest mostly comes through information available on the web where you can find IBM adding products such as ClearSpeed to its pre-packaged clusters.

In all honesty, however, IBM talks to us the least of all the major - and minor - vendors, so this may just be a case of radio silence rather than disinterest on IBM's part.

Or perhaps IBM is so focused on Cell's accelerator potential that it doesn't care to promote anything else.

Reno airport: "Now as crappy as Reno"

As always, Dell is bringing up the rear with its cautious approach. The company will no doubt spring into action when one or all of these products really take off.

And then there's big pappa Intel, which is playing a bit of catch-up in this market.

A number of the accelerator start-ups are waiting for Intel to sort out its upcoming QuickPath interconnect technology before plugging their wares right into Xeon sockets. In addition, developers are waiting, waiting and waiting for Intel to give some glimpse of its own accelerator known as Larrabee.

Nvidia's Keane told us that "Larrabee is whatever Intel wants it to be at this point," and he's right. Intel has failed to reveal anything concrete about the product at all other than to say that it will have many cores and rely on the x86 instruction set.

You can bet, however, that Intel will eventually push the product - due to arrive sometime this decade - as an easy to program accelerator.

Codetacular 2008

At this time last year, we were very, very skeptical about the whole acceleration hype stream.

Friends in the high performance computing arena kept emphasizing how hard it is to develop code for all of these products, be they FPGAs, GPUs or fancy IDE-wrapped silicon. We were told time and again that the world simply does not have enough strong coders to deal with these products.

While the coding issues remain immense, we're now far more encouraged about the prospect of accelerators playing a meaningful role in data centers of various sizes.

The slow-moving software industry - Microsoft, we're looking at you - has finally come around to the realities posed by multi-core chips from Intel and AMD. As a result, we find a significant, mainstream effort underway to craft multi-threaded software and to fund start-ups that can help with the parallelization push.

The accelerator set will benefit from this momentum, as many of the techniques needed to spread software across x86 cores carry over to multi-core, specialized silicon.

In addition, high performance computing systems continue to account for larger and larger chunks of server sales. This leads to the large vendors catering to HPC customers' needs, and what the HPC folks want more than anything is to run software as fast as possible in the hopes of gaining an edge over competitors.

During 2008, all of the major acceleration plays should have reached the point where they're viable options on a Tier 1 server vendor's price list.

We suspect this will result in a large number of new customer trials around the accelerator technology, which is both good and bad for the hype-filled vendors.

So far, the accelerator upstarts have been able to hand pick their best test cases - customers that ported their code over to a custom chip with no work at all or that saw 200x speed ups. As more customers try out this hardware, reality will start to undermine these shining examples.

Thankfully, that should help us all gauge how promising FPGAs and GPUs are as potential mainstream products. ®